The Tongue Display Unit (TDU) is a device that transmits visual information through the tongue using electro-tactile signals. The dream of restoring certain functions of vision through the sense of touch is actually a very old one, but we are only beginning to approach it today, thanks in part to a better understanding of how the brain functions and to recent technological advancements such as the miniaturisation of computers and of capture devices like webcams.

The first visual-tactile interface system was developed by Dr. Paul Bach-y-Rita in the 1970s. The original tactile visual sensory substitution device (TVSS) was very large (due in part to the size of computers and cameras at the time), and it stimulated the skin of the back to transmit visual information.

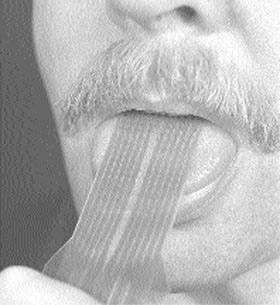

This system was later adapted by scientists Kurt Kaczmarek and Paul Bach-y-Rita at the University of Wisconsin to use the tactile sense of the tongue to transmit visual information to the brain. For more information, please see: http://kaz.med.wisc.edu/Publicity/Synopsis.html

This system was given to the Maurice Ptito laboratory in vision research in 2005 to help develop the device and our understanding of brain plasticity and sensory substitution. Basically, the system that we have is a webcam connected to a laptop. The laptop communicates with the tongue device over a wireless Bluetooth connection, sending the image captured by the camera in real time to the tongue stimulator array. This array consists of a grid of electrodes that recreates the image on the tongue with electrical stimulation.

I had the honour of meeting Dr. Paul Bach-y-Rita at the inauguration of the Harland Sanders Chair in visual science. We had very interesting conversations on the nature of sensory substitution and the philosophical implications of brain plasticity. My goal as a researcher is to find out whether it is possible to use this device for navigation, and which cortical regions are recruited during this task.
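To make the camera-to-tongue step described above a little more concrete, here is a minimal Python sketch of how a camera frame could be reduced to a coarse grid of stimulation intensities. It is not the actual TDU software: the function name, the default 10 x 10 grid, and the 8 intensity levels are all assumptions made for illustration, and the frame is assumed to be a greyscale image given as a list of rows of 0-255 values.

```python
def frame_to_electrode_grid(frame, grid_rows=10, grid_cols=10, levels=8):
    """Reduce a greyscale frame to a coarse grid of stimulation levels.

    frame: list of rows, each a list of pixel values in 0..255.
    grid_rows, grid_cols: electrode grid size (assumed, not the real TDU layout).
    levels: number of discrete stimulation intensities (assumed).
    """
    height = len(frame)
    width = len(frame[0])
    if height < grid_rows or width < grid_cols:
        raise ValueError("frame is smaller than the electrode grid")

    grid = []
    for r in range(grid_rows):
        # Pixel rows covered by this electrode row.
        y0 = r * height // grid_rows
        y1 = (r + 1) * height // grid_rows
        row = []
        for c in range(grid_cols):
            # Pixel columns covered by this electrode column.
            x0 = c * width // grid_cols
            x1 = (c + 1) * width // grid_cols
            # Average brightness of the block of pixels under this electrode.
            total = sum(frame[y][x] for y in range(y0, y1) for x in range(x0, x1))
            mean = total / ((y1 - y0) * (x1 - x0))
            # Quantise the mean brightness into one of `levels` intensities.
            row.append(min(levels - 1, int(mean * levels / 256)))
        grid.append(row)
    return grid


if __name__ == "__main__":
    # A tiny 4 x 4 test frame: dark on the left, bright on the right.
    frame = [[0, 0, 255, 255] for _ in range(4)]
    for electrode_row in frame_to_electrode_grid(frame, grid_rows=2, grid_cols=2):
        print(electrode_row)
```

Block averaging is only one possible way to downsample; a real system might also crop, invert contrast, or apply edge detection before sending the pattern to the array.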
